What Temperature Should Your Data Center Be?

Over the past five years, average server rack density has increased from 5 kW to 8-10 kW, driving up temperatures in the data center. These rising temperatures can become a major issue if they are not handled properly.

Maintaining the correct temperature is extremely important because nearly 30% of unplanned data center outages are caused by environmental issues. Failing to monitor and analyze your environment also hurts energy and resource efficiency. By implementing an environmental monitoring system, you can closely monitor and maintain temperature, humidity, airflow, and other conditions to reduce energy costs and extend equipment lifespan.

Keeping temperatures stable can be expensive and is a major concern for data center managers. About 50% of the power used in a data center goes toward cooling, and it is easy to overcool or undercool equipment and waste resources. As energy efficiency and sustainability become top objectives, data center managers need to optimize cooling and keep temperatures steady.

What Is the Right Data Center Temperature?

To keep equipment cooled efficiently without overheating, data centers must operate within a specific temperature range.

The American Society of Heating, Refrigerating, and Air-Conditioning Engineers (ASHRAE) offers the most widely accepted guidelines for data centers. In the most recent Thermal Guidelines for Data Processing Environments, ASHRAE provides a recommended range of 64-81°F or 18-27°C and an allowable range of 59-90°F or 15-32°C.

For high-density servers, ASHRAE offers a recommended range of 64-72°F or 18-22°C and an allowable range of 41-77°F or 5-25°C.

These ranges apply to the air at each server's intake. The exhaust temperature should be at least 35°F (about 20°C) warmer than the intake.
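The ranges above are easy to misapply when switching between units, because the exhaust rule describes a temperature difference rather than a reading. The following is a minimal Python sketch, assuming the figures above are hard-coded in Celsius, that classifies an intake reading and checks the exhaust gap. The function names and the `standard`/`high_density` labels are hypothetical and not part of any ASHRAE tool.

```python
# Sketch: check server intake readings against the ASHRAE ranges quoted above.
# Assumption: ranges are hard-coded from this article's figures; the labels
# "standard" and "high_density" are hypothetical names.

# (recommended_low, recommended_high, allowable_low, allowable_high), in °C
RANGES_C = {
    "standard": (18.0, 27.0, 15.0, 32.0),
    "high_density": (18.0, 22.0, 5.0, 25.0),
}


def f_to_c(temp_f: float) -> float:
    """Convert an absolute temperature reading from °F to °C."""
    return (temp_f - 32.0) * 5.0 / 9.0


def delta_f_to_c(delta_f: float) -> float:
    """Convert a temperature difference from °F to °C (no 32-degree offset)."""
    return delta_f * 5.0 / 9.0


def classify_inlet(inlet_c: float, equipment: str = "standard") -> str:
    """Return 'recommended', 'allowable', or 'out_of_range' for an intake reading."""
    rec_lo, rec_hi, allow_lo, allow_hi = RANGES_C[equipment]
    if rec_lo <= inlet_c <= rec_hi:
        return "recommended"
    if allow_lo <= inlet_c <= allow_hi:
        return "allowable"
    return "out_of_range"


def exhaust_delta_ok(inlet_c: float, exhaust_c: float, min_delta_c: float = 20.0) -> bool:
    """True if exhaust is at least min_delta_c warmer than intake (about 20 °C per the article)."""
    return exhaust_c - inlet_c >= min_delta_c


if __name__ == "__main__":
    print(classify_inlet(24.0))                  # recommended
    print(classify_inlet(24.0, "high_density"))  # allowable
    print(round(delta_f_to_c(35.0), 1))          # 19.4 (a 35 °F difference)
    print(round(f_to_c(35.0), 1))                # 1.7 (35 °F as an absolute reading)
```

The last two lines show why the exhaust rule must be treated as a difference: converting 35°F as if it were a reading gives 1.7°C, while a 35°F gap between intake and exhaust is about 19.4°C.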

Key Factors That Influence Data Center Temperature

The temperature in a data center may be influenced by various factors, including:

- Type of equipment. Each piece of equipment may have a different temperature tolerance, which needs to be considered when deciding where to place it.

- Design and layout. Rack density, airflow patterns, and the overall arrangement of equipment affect how heat is distributed and dissipated.

- Cooling system efficiency. The effectiveness of your cooling system affects where you can set temperature set points and how stable temperatures remain.

- Airflow management. How racks are arranged and how well bypass airflow is reduced can impact temperature distribution.

- Efficiency goals. Sustainability objectives are common, and they must be balanced with the need to cool equipment sufficiently.

- Location and climate. Data centers in colder climates may utilize free cooling while those in warmer climates typically require more mechanical cooling. Cooling requirements may change seasonally.

- Utilization and workload. Higher server utilization generates more heat, which requires more robust cooling to maintain ideal temperatures.

- Future expansion. Forward-thinking temperature management must account for changes in IT equipment and potential increases in heat generation.

- Regulatory and compliance requirements. Some industries and regions have specific requirements regarding data center environmental conditions.

Why Is Data Center Temperature Monitoring Important?

The key reasons why data centers should maintain the proper temperature include:

- Equipment reliability and lifespan. Most data center equipment is designed to operate within a specific temperature range. Hot spots can cause increased wear and tear that can lead to component failures. Consistent temperatures in the optimal range help extend the lifespan of equipment, reducing the frequency of failures and the need for premature replacements.

- Energy efficiency. Cooling a data center consumes a lot of energy. Maintaining temperatures within the recommended range prevents overcooling, which wastes energy and money.

- Compliance with standards. Adhering to manufacturer or industry guidelines helps ensure that your facility meets best practices and regulatory requirements.

As such, utilizing environmental sensors and monitoring software is critical to mitigate risks in the data center.

Where Should Temperature Sensors Be Placed in a Data Center?

ASHRAE recommends mounting six temperature sensors in each rack: one at the top, middle, and bottom of both the front and back.
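As an illustration of that six-sensor layout, the minimal Python sketch below stores one reading per position and derives two values a monitoring tool might report per rack: the hottest intake reading and Delta T. It assumes readings in °C, and `RackReadings` and its methods are hypothetical names. Averaging the front and back readings is one simple way to compute Delta T, not necessarily how any particular DCIM product does it.

```python
# Sketch: model the six-sensor-per-rack layout and derive per-rack Delta T.
# Assumptions: readings are in °C; RackReadings and its methods are
# hypothetical names, not a DCIM vendor's API.
from __future__ import annotations

from dataclasses import dataclass
from statistics import mean

SIDES = ("front", "back")
HEIGHTS = ("top", "middle", "bottom")
# Six positions per rack: top, middle, and bottom on both the front and back.
POSITIONS = [(side, height) for side in SIDES for height in HEIGHTS]


@dataclass
class RackReadings:
    rack_id: str
    temps_c: dict[tuple[str, str], float]  # (side, height) -> temperature in °C

    def missing_positions(self) -> list[tuple[str, str]]:
        """Positions with no reading, e.g. an unmounted or offline sensor."""
        return [p for p in POSITIONS if p not in self.temps_c]

    def inlet_max(self) -> float:
        """Hottest front (intake) reading, the value to compare with inlet ranges."""
        return max(self.temps_c[("front", h)] for h in HEIGHTS)

    def delta_t(self) -> float:
        """Mean back (exhaust) temperature minus mean front (intake) temperature."""
        front = mean(self.temps_c[("front", h)] for h in HEIGHTS)
        back = mean(self.temps_c[("back", h)] for h in HEIGHTS)
        return back - front


if __name__ == "__main__":
    rack = RackReadings("R01", {
        ("front", "top"): 24.0, ("front", "middle"): 22.0, ("front", "bottom"): 20.0,
        ("back", "top"): 44.0, ("back", "middle"): 42.0, ("back", "bottom"): 40.0,
    })
    print(rack.missing_positions())  # []
    print(rack.inlet_max())          # 24.0
    print(rack.delta_t())            # 20.0
```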

How to Monitor Data Center Temperature

Once you have installed temperature sensors in your data center, use Data Center Infrastructure Management (DCIM) software to monitor your environment in real time.

DCIM software automatically collects the data from temperature sensors and provides actionable insights that improve uptime and efficiency.

A modern DCIM solution makes data center temperature monitoring easy with:

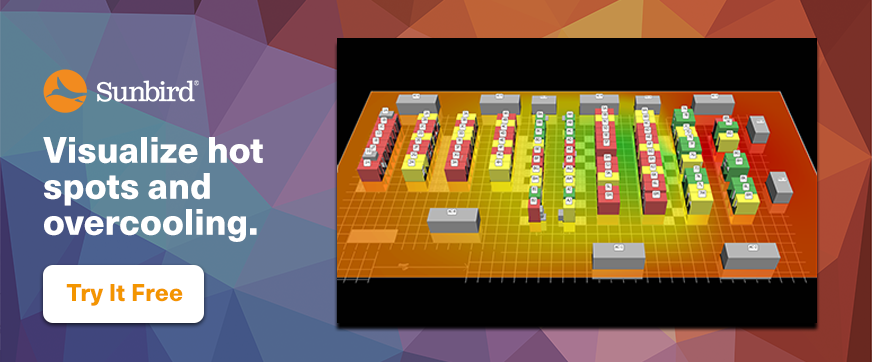

- Thermal map time-lapse videos. Visualize the formation of hot spots with a 3D digital twin of your data center so you can address them quickly.

- Thresholds and alerts. Set warning and critical temperature thresholds and receive automatic alerts when a temperature sensor reads outside your chosen range. This helps prevent overcooling, which wastes energy and money: for every degree you raise the baseline temperature, you can save up to 4% in energy costs.

- ASHRAE cooling charts. Chart all your cabinets on a psychrometric cooling chart to see at-a-glance exactly which cabinets are outside of your thermal envelope.

- Track and trend KPIs. With out-of-the-box dashboard charts, you can effortlessly track temperature trends, temperature per cabinet, Delta T per cabinet, cooling capacity, and more. This lets you spend less time gathering data and performing manual math and more time optimizing your data center.
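The threshold-and-alert idea above can be sketched in a few lines of Python. This is a minimal, hypothetical example rather than any DCIM product's API: the `Thresholds` class, the `check_sensors` function, the sensor IDs, and the limit values are all assumptions chosen for illustration. Flagging low readings as well as high ones is one way to surface possible overcooling.

```python
# Sketch: warning/critical threshold checks of the kind described above.
# Assumptions: the limit values are hypothetical, loosely based on the ASHRAE
# inlet ranges quoted earlier; alerts are returned as tuples rather than sent
# as notifications.
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    critical_low: float
    warning_low: float
    warning_high: float
    critical_high: float

    def level(self, temp_c: float) -> str:
        """Classify one reading; low readings flag possible overcooling."""
        if temp_c < self.critical_low or temp_c > self.critical_high:
            return "critical"
        if temp_c < self.warning_low or temp_c > self.warning_high:
            return "warning"
        return "ok"


def check_sensors(readings: dict[str, float], limits: Thresholds) -> list[tuple[str, str, float]]:
    """Return (sensor_id, level, temp_c) for every reading outside the normal band."""
    alerts = []
    for sensor_id, temp_c in sorted(readings.items()):
        level = limits.level(temp_c)
        if level != "ok":
            alerts.append((sensor_id, level, temp_c))
    return alerts


# Hypothetical inlet limits: warn outside 18-27 °C, critical outside 15-32 °C.
INLET_LIMITS = Thresholds(critical_low=15.0, warning_low=18.0,
                          warning_high=27.0, critical_high=32.0)

if __name__ == "__main__":
    readings = {"R01-front-top": 28.5, "R01-front-mid": 24.0,
                "R02-front-bottom": 16.0, "R03-front-top": 33.0}
    for alert in check_sensors(readings, INLET_LIMITS):
        print(alert)
```

Separating warning from critical levels lets an operator react to drift before a reading leaves the allowable band.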

Case Study: Vodafone Uses DCIM Software for Temperature Monitoring

In one real-world example, Vodafone lacked visibility into the temperatures in its data center, so it could not tell whether it was safe to raise set points to save on energy costs.

Andrew Marsh, the Senior Manager for Infrastructure and Data Centers for Vodafone in the UK, said that they “wanted to gain insight regarding power usage, cooling, and data and power connections,” and needed a tool that provided visualization, an easy-to-use dashboard, a comprehensive asset inventory, and in-depth reporting capabilities.

Vodafone increased the number of temperature sensors in a single location from 16 to 800 and leveraged DCIM software to monitor the data.

“We’re placing temperature sensors at the top, middle, and bottom of every rack,” said Marsh. “We’re using Sunbird to measure the individual temperatures so we can see the Delta T. That allows us to raise temperatures which saves us a large amount of money.”

How to Improve Data Center Temperature Management

In addition to deploying temperature sensors and monitoring them with DCIM software, there are several best practices for data center professionals to better manage the temperature in their facility and maintain efficiency.

- Separate hot and cold air. Hot aisle/cold aisle containment is a data center layout configuration in which cabinets are placed so that the front of one cabinet never faces the back of another. Rows are positioned so that the fronts of adjacent rows face each other across a cold aisle and the backs face each other across a hot aisle, creating alternating rows of cold air supply and hot air return. Separating hot and cold air maximizes cooling efficiency because hot exhaust does not mix with, and warm up, the cold supply air before it reaches equipment intakes.

- Seal gaps. A highly efficient hot aisle/cold aisle configuration requires sealing all air gaps within and between cabinets, placing physical barriers between aisles, forcing air from raised floors into the cold aisles, and installing exhaust vents in the ceiling above the hot aisles.

- Reduce bypass airflow. Install floor grommets to seal cable openings in raised floor tiles, which improves cooling capacity and increases energy efficiency. Placing grommets under the rack allows equipment to be easily installed and upgraded without the need to replace whole panels. It is also crucial to keep up with infrastructure maintenance, including cleaning vents and filters and checking for and repairing air leaks, so that everything functions at full capacity.

- Consider liquid cooling. For high-density racks that cannot be sufficiently cooled with traditional air cooling, liquid cooling may be a viable option. Liquid cooling offers improved sustainability, efficiency, and reliability while enabling more servers to be placed in existing racks.
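The rule behind hot aisle/cold aisle layouts, that no cabinet front should face the back of another, can be checked mechanically. The minimal Python sketch below assumes rows are listed from north to south and described only by which way their fronts face; `classify_aisles` and `layout_ok` are hypothetical names used for illustration.

```python
# Sketch: check that a row layout follows the hot aisle/cold aisle pattern.
# Assumptions: rows are listed in order from north to south, and each row is
# described only by the direction its cabinet fronts face ("north" or "south").
from __future__ import annotations

VALID = {"north", "south"}


def classify_aisles(facing: list[str]) -> list[str]:
    """Label each aisle between consecutive rows as 'cold', 'hot', or 'front-to-back'."""
    if not set(facing) <= VALID:
        raise ValueError(f"facing must only contain {sorted(VALID)}")
    aisles = []
    for north_row, south_row in zip(facing, facing[1:]):
        if north_row == "south" and south_row == "north":
            aisles.append("cold")  # fronts face each other: cold air supply
        elif north_row == "north" and south_row == "south":
            aisles.append("hot")  # backs face each other: hot air return
        else:
            # Both rows face the same way, so one row's exhaust blows into the next row's intake.
            aisles.append("front-to-back")
    return aisles


def layout_ok(facing: list[str]) -> bool:
    """True if no cabinet front faces the back of another row."""
    return "front-to-back" not in classify_aisles(facing)


if __name__ == "__main__":
    print(classify_aisles(["south", "north", "south", "north"]))  # ['cold', 'hot', 'cold']
    print(layout_ok(["south", "south", "north"]))                  # False
```

When no aisle is labeled `front-to-back`, the row orientations necessarily alternate, so cold and hot aisles alternate as well.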

Bringing It All Together

As rack densities and temperatures continue to increase in data centers, so do operating costs. Data center professionals must understand the importance of improving cooling efficiency wherever possible to keep costs down, but this must be balanced by the need to keep equipment running safely.

This might seem challenging at first, but professionals should prioritize temperature management because it has a major impact on sustainability, uptime, and capacity planning. Modern DCIM software provides the monitoring, alerting, and analytics needed to do this and shows where energy and money can be saved without introducing risk.

Want to see how Sunbird’s modern DCIM software can help you effortlessly monitor your data center temperature? Get your free test drive today.